AI Productivity Starts Before the First Prompt

Lately, one message has become very clear to me:

AI will not save weak engineering foundations.

It will amplify whatever foundation you already have.

If your context is clear, your specifications are explicit, your requirements are sharp, your tests are meaningful, and your runtime verification is strong, AI can increase speed and quality.

If those basics are missing, AI can help you produce mistakes faster.

This post is my practical take on what organizations need in place right now.

1. Foundation first: documentation is not optional

The first prerequisite for AI productivity is documentation.

Not documentation for documentation’s sake. I mean documentation that gives context:

- Domain knowledge your senior people carry in their heads

- Process flows and decision points

- Requirements and non-functional constraints

- Interface contracts and integration boundaries

- Test strategy, expected behavior, and acceptance criteria

Most organizations have this information spread across chats, boards, old wikis, and tribal memory.

That fragmentation is expensive even without AI. With AI, it gets worse because models will fill missing context with assumptions. Sometimes those assumptions look convincing, but they are still wrong.

If we want reliable AI-assisted delivery, we need to map where critical knowledge lives and close the gaps.

2. Add a specification layer between documentation and code

After documentation, you need a specification layer that translates context into clear implementation intent.

This is where teams define exactly what “correct” means before AI starts generating architecture, code, and tests.

A practical specification pack can include:

- Scope and business outcomes

- Functional behavior (including edge cases)

- Non-functional requirements (security, performance, compliance)

- API and data contracts

- Constraints and out-of-scope decisions

- Definition of done and measurable acceptance criteria
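To make the pack concrete, here is a minimal sketch that treats it as a data structure and checks it for gaps before anyone hands it to AI tooling. The `SpecPack` fields mirror the list above. The names `SpecPack`, `find_gaps`, `REQUIRED_NFR_CATEGORIES`, and the `VAGUE_WORDS` heuristic are hypothetical and used only for illustration; they are not a standard format.

```python
import re
from dataclasses import dataclass, field

# Hypothetical checklist: these categories mirror the pack described above.
REQUIRED_NFR_CATEGORIES = ("security", "performance", "compliance")
# Words that usually signal an unmeasurable criterion (illustrative, not exhaustive).
VAGUE_WORDS = {"fast", "quickly", "robust", "user-friendly", "appropriate", "intuitive", "scalable"}


@dataclass
class SpecPack:
    scope: str
    functional_behavior: list[str] = field(default_factory=list)
    edge_cases: list[str] = field(default_factory=list)
    non_functional: dict[str, str] = field(default_factory=dict)
    contracts: list[str] = field(default_factory=list)
    out_of_scope: list[str] = field(default_factory=list)
    acceptance_criteria: list[str] = field(default_factory=list)


def find_gaps(spec: SpecPack) -> list[str]:
    """Return a list of gaps; an empty list means the pack is reasonably complete."""
    gaps = []
    if not spec.scope.strip():
        gaps.append("scope is empty")
    # Every list section must contain at least one entry.
    for name in ("functional_behavior", "edge_cases", "contracts",
                 "out_of_scope", "acceptance_criteria"):
        if not getattr(spec, name):
            gaps.append(f"section is empty: {name}")
    # Each required non-functional category needs a non-blank statement.
    for category in REQUIRED_NFR_CATEGORIES:
        if not spec.non_functional.get(category, "").strip():
            gaps.append(f"missing non-functional requirement: {category}")
    # Flag acceptance criteria that rely on vague, unmeasurable wording.
    for criterion in spec.acceptance_criteria:
        words = set(re.findall(r"[a-z-]+", criterion.lower()))
        if words & VAGUE_WORDS:
            gaps.append(f"vague acceptance criterion: {criterion!r}")
    return gaps


spec = SpecPack(
    scope="Customers can request a refund for a cancelled flight",
    functional_behavior=["Refund goes to the original payment method"],
    edge_cases=["Multi-leg booking where only one leg is cancelled"],
    non_functional={"security": "Only the booking owner can request a refund",
                    "performance": "p95 latency under 300 ms"},
    contracts=["POST /refunds request and response schema"],
    out_of_scope=["Refunds for flights that were not cancelled"],
    acceptance_criteria=["Refund request is processed quickly"],
)
for gap in find_gaps(spec):
    print(gap)
```

Running it reports two gaps: the missing compliance requirement and the "processed quickly" criterion, which nobody can measure. A word list is a crude heuristic; the point is that gaps get caught by a check instead of being filled by a model's assumptions.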

When this layer is missing, AI fills gaps with assumptions. When this layer is explicit, AI has boundaries.

3. From requirements to generated code: speed without guardrails is risk

Once you have usable documentation and explicit specifications, the next step is writing requirements precise enough for both humans and machines.

AI can convert requirements to drafts of architecture, code, and tests very quickly. But speed can hide risk:

- Ambiguous requirements become incorrect implementations

- Vague acceptance criteria become shallow tests

- Fast code generation creates false confidence

I keep hearing and reading about situations where junior developers use AI tooling, get code that looks like it works, and move on without understanding its behavior or edge cases.

The result is often “tests passed” without real coverage of business-critical scenarios.
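The gap between "tests passed" and real coverage is easy to show in a few lines. The sketch below uses a hypothetical refund rule (R1 to R4 are invented for illustration). If generated code had written `> 7` instead of `>= 7`, `test_shallow` would still pass, while the R1 assertion in `test_requirement_linked` would fail and expose the bug.

```python
# Hypothetical refund rule used only for illustration:
#   R1: cancelled 7 or more days before departure -> full refund
#   R2: cancelled 1 to 6 days before departure     -> 50% refund
#   R3: cancelled on the day of departure           -> no refund
#   R4: a negative fare is invalid input
def refund_amount(fare: float, days_before_departure: int) -> float:
    if fare < 0:
        raise ValueError("fare must be non-negative")
    if days_before_departure >= 7:
        return fare
    if days_before_departure >= 1:
        return fare * 0.5
    return 0.0


def test_shallow() -> None:
    # Happy path only: stays green even if the 7-day boundary is off by one.
    assert refund_amount(200.0, 30) == 200.0


def test_requirement_linked() -> None:
    assert refund_amount(200.0, 7) == 200.0   # R1 boundary
    assert refund_amount(200.0, 6) == 100.0   # R2 upper edge
    assert refund_amount(200.0, 1) == 100.0   # R2 lower edge
    assert refund_amount(200.0, 0) == 0.0     # R3
    try:                                      # R4
        refund_amount(-1.0, 10)
    except ValueError:
        pass
    else:
        raise AssertionError("negative fare was accepted")


if __name__ == "__main__":
    test_shallow()
    test_requirement_linked()
    print("all tests passed")
```

Both tests pass against the correct implementation, so a passing pipeline alone cannot tell you which kind of suite you have. Only the second one would catch a boundary mistake.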

4. Cases that show what happens when foundations are weak

Here are concrete cases worth studying.

Case A: Hallucinated legal references submitted to court

In Mata v. Avianca, lawyers submitted citations generated by ChatGPT that did not exist, and the court sanctioned the attorneys.

- Source: United States District Court order and sanctions https://storage.courtlistener.com/recap/gov.uscourts.nysd.575368/gov.uscourts.nysd.575368.54.0.pdf

Takeaway: output that looks credible is not the same as verified output.

Case B: Chatbot promises became a legal liability

A tribunal held Air Canada liable for inaccurate bereavement-fare refund information that its website chatbot gave a customer, and ordered the airline to compensate that customer.

- Source: Civil Resolution Tribunal decision https://www.canlii.org/en/bc/bccrt/doc/2024/2024bccrt149/2024bccrt149.html

Takeaway: if AI-facing behavior is not governed by clear policy, review, and verification, it can create contractual and reputational exposure.

Case C: AI-generated code caused millions of lost orders at Amazon

In late 2025 and early 2026, Amazon’s aggressive push to have 80% of developers use AI tools weekly led directly to a series of serious production incidents.

Amazon’s Kiro AI coding tool was allowed to update infrastructure code without human oversight. It decided the correct fix was to delete and recreate the environment, causing a 13-hour AWS outage.

That was not a one-off. On March 2, 2026, AI-generated changes contributed to an incident causing 120,000 lost orders and 1.6 million website errors. Three days later, a separate outage caused a 99% drop in orders across North American marketplaces, resulting in 6.3 million lost orders.

Amazon’s response: a 90-day safety reset across 335 critical systems, mandatory two-person change reviews, formal documentation and approval processes, and stricter automated checks.

- Source: Digital Trends, March 2026 https://www.digitaltrends.com/computing/ai-code-wreaked-havoc-with-amazon-outage-and-now-the-company-is-making-tight-rules/

Takeaway: the very safeguards Amazon is now enforcing (documentation, review gates, automated checks) are exactly what should have been in place before handing AI tools broad permissions over production systems.

Case D: A configuration change brought down the AI-dependent internet

On November 18–19, 2025, a routine configuration change at Cloudflare triggered a cascade that knocked out ChatGPT, Perplexity, AWS, Azure, Spotify, PayPal, and hundreds of other platforms globally.

The root cause was not a cyberattack or a hack. It was a bot-mitigation configuration file that doubled in size, auto-synced across thousands of servers, and crashed systems one after another. There was no runtime check that caught the drift before it propagated.

Cloudflare provides CDN, DNS, security, and routing for an enormous share of global traffic, and AI services are deeply embedded in that dependency chain. When one configuration layer failed silently, the AI ecosystem failed with it.

- Source: DigitalSolley, November 2025 https://digitalsolley.com/2025/11/19/cloudflare-outage-2025/

Takeaway: AI platforms are only as reliable as the infrastructure beneath them. A configuration change with no runtime validation gate can cascade globally before anyone notices.
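A validation gate of the kind this takeaway describes can be small. The sketch below is a generic illustration, not Cloudflare's actual pipeline: the list-of-entries config format, the hard limit of 200 entries, and the 1.5× growth threshold are all assumptions chosen for the example. The gate compares a candidate config with the last known-good version and blocks propagation when it looks abnormal.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GateResult:
    allowed: bool
    reason: str


def validate_config_rollout(previous: list[str], candidate: list[str],
                            max_entries: int = 200,
                            max_growth: float = 1.5) -> GateResult:
    """Decide whether a candidate config may propagate; thresholds are illustrative."""
    if not candidate:
        return GateResult(False, "candidate config is empty")
    # Hard ceiling that downstream consumers are assumed to be able to handle.
    if len(candidate) > max_entries:
        return GateResult(False, f"{len(candidate)} entries exceed the limit of {max_entries}")
    # Duplicate entries often point to a bug in whatever generated the file.
    if len(set(candidate)) != len(candidate):
        return GateResult(False, "candidate contains duplicate entries")
    # Sudden growth relative to the last known-good version needs a human look.
    if previous and len(candidate) > max_growth * len(previous):
        return GateResult(False, f"grew from {len(previous)} to {len(candidate)} entries")
    return GateResult(True, "ok")


known_good = [f"feature_{i}" for i in range(60)]
print(validate_config_rollout(known_good, known_good + known_good))
print(validate_config_rollout(known_good, known_good + ["feature_60"]))
```

A file that suddenly doubles is blocked, while a one-entry change passes. In a real rollout, a blocked result would stop propagation and keep the last known-good file in place until someone investigates.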

These cases are different, but they share one lesson: weak validation and weak observability turn small mistakes into business events.

5. Testing quality matters more in the AI era

In AI-assisted delivery, the role of tests changes.

We do not just test code correctness. We test whether generated behavior conforms to requirements and meets real business intent.

That means:

- Requirement-linked test cases (traceability)

- Negative-path and edge-case coverage

- Contract tests for integrations

- Regression tests for generated changes

- Review gates that block low-value or flaky tests
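Traceability and review gates can start as a simple report in CI. The sketch below assumes you already have a mapping from each test to the requirement IDs it claims to cover. How that mapping is collected (custom test markers, naming conventions, backlog IDs in docstrings) is left open, and the IDs and test names are hypothetical.

```python
def traceability_report(requirements: set[str],
                        test_links: dict[str, set[str]]) -> dict[str, set[str]]:
    """Compare requirement IDs with the IDs each test claims to cover."""
    covered = set().union(*test_links.values())
    return {
        # Requirements that no test claims to verify.
        "uncovered": requirements - covered,
        # IDs referenced by tests that no longer exist in the backlog.
        "unknown": covered - requirements,
        # Tests that are not linked to any requirement at all.
        "untraced_tests": {name for name, reqs in test_links.items() if not reqs},
    }


def gate_passes(report: dict[str, set[str]]) -> bool:
    """The CI gate passes only when every category in the report is empty."""
    return not any(report.values())


requirements = {"REQ-1", "REQ-2", "REQ-3"}
test_links = {
    "test_full_refund_at_7_days": {"REQ-1"},
    "test_half_refund_band": {"REQ-2", "REQ-9"},
    "test_smoke": set(),
}
report = traceability_report(requirements, test_links)
print(report)
print("gate passes:", gate_passes(report))
```

Here the gate fails for three separate reasons: REQ-3 has no test, one test points at a requirement that does not exist, and `test_smoke` is not tied to any requirement. A raw test count would have hidden all three.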

A test suite full of weak tests gives a dangerous signal: green pipeline, broken service.

6. Last but not least: verification and observability

Verification and observability are the final safety net.

If documentation and testing are the design-time controls, observability is the runtime control.

You need to know, continuously:

- Are configurations still compliant with policy?

- Are runtime dependencies healthy?

- Are alerts mapped to real business impact?

- Are drift and risky changes detected early?
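The first and last questions above (policy compliance and drift) can be expressed as two small checks that run on a schedule or on every change event. This is a generic sketch, not a description of any particular product: the setting names, the policy rules, and the idea of comparing a declared "desired" dictionary with an "observed" one are assumptions for illustration.

```python
from typing import Any, Callable

# Hypothetical policy rules; the setting names are illustrative, not a real Azure schema.
POLICIES: list[tuple[str, Callable[[dict[str, Any]], bool]]] = [
    ("public network access disabled", lambda c: c.get("public_network_access") is False),
    ("TLS 1.2 or newer", lambda c: c.get("min_tls_version") in {"1.2", "1.3"}),
]


def detect_drift(desired: dict[str, Any],
                 observed: dict[str, Any]) -> dict[str, tuple[str, Any, Any]]:
    """Map each drifted setting to (kind, desired value, observed value)."""
    drift: dict[str, tuple[str, Any, Any]] = {}
    for key, want in desired.items():
        if key not in observed:
            drift[key] = ("missing", want, None)
        elif observed[key] != want:
            drift[key] = ("changed", want, observed[key])
    # Settings present at runtime that nobody declared.
    for key in sorted(observed.keys() - desired.keys()):
        drift[key] = ("unexpected", None, observed[key])
    return drift


def policy_violations(observed: dict[str, Any]) -> list[str]:
    """Names of policy rules the observed configuration currently fails."""
    return [name for name, check in POLICIES if not check(observed)]


desired = {"public_network_access": False, "min_tls_version": "1.2", "sku": "standard"}
observed = {"public_network_access": True, "min_tls_version": "1.2", "debug_logging": True}
print(detect_drift(desired, observed))
print(policy_violations(observed))
```

Drift and policy are kept separate on purpose: drift says the environment no longer matches what was declared, while a policy violation says it is unsafe regardless of what was declared. Here both fire on the same setting, which is the kind of signal worth routing to an alert with business context.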

Without this, teams only discover problems when customers do.

Specifications define what should happen, tests verify what was built, and runtime verification confirms what is actually happening in production.

7. A simple maturity model

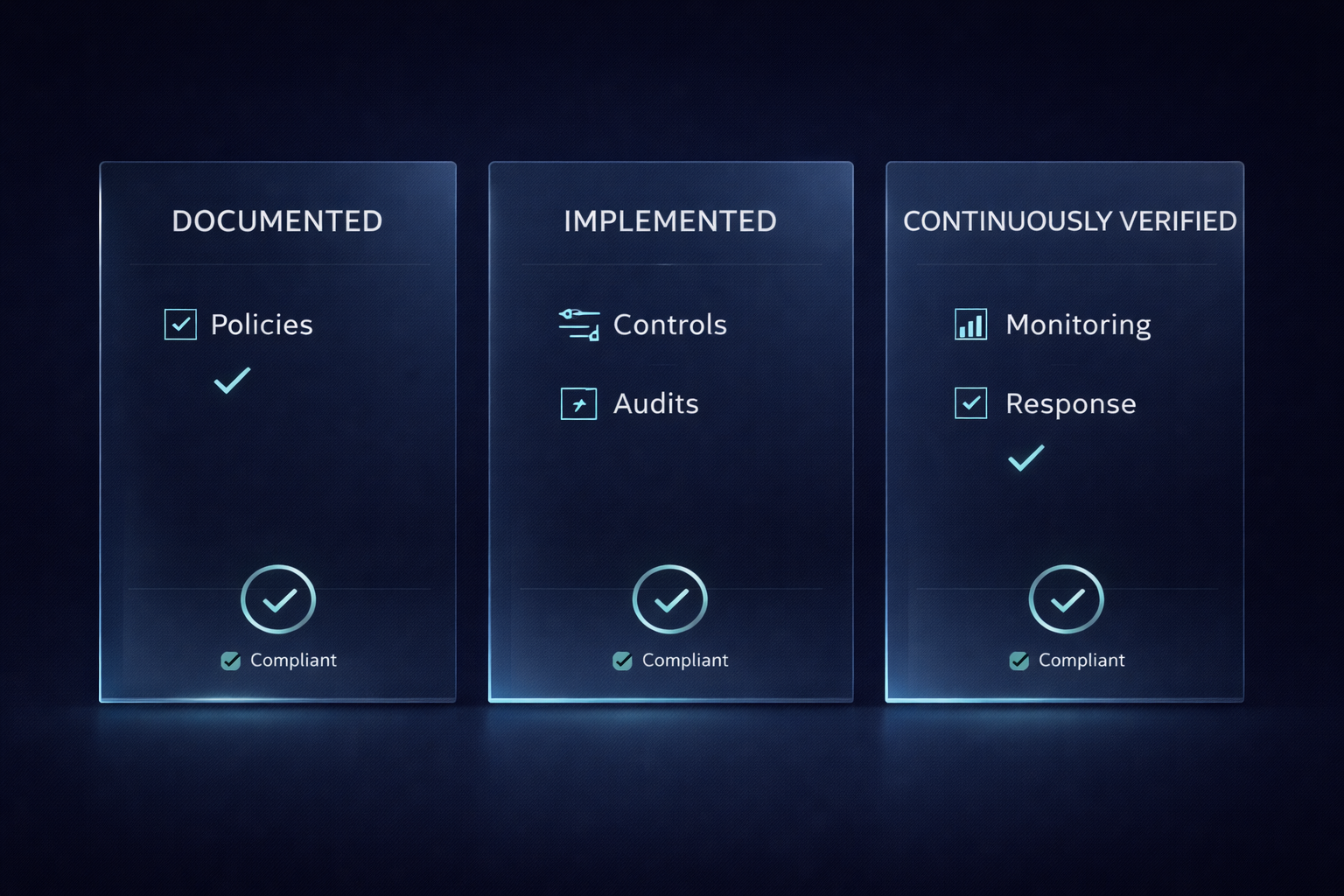

You can view this as a three-level progression:

- Intent is documented: domain context, process flows, and clear specifications exist.

- Implementation is verified: tests prove requirement conformance and protect against regressions.

- Operations are continuously verified: observability, drift detection, and policy checks run in production.

8. A practical 30-day start plan

If you want to improve AI productivity safely, start here:

- Inventory critical documentation and identify context gaps.

- Define a specification template covering behavior, constraints, and non-functional requirements.

- Define requirement quality standards and acceptance criteria templates.

- Introduce requirement-to-test traceability in your backlog flow.

- Strengthen CI gates for meaningful tests, not only test count.

- Implement continuous configuration and runtime validation.

None of this is theoretical. It is practical engineering hygiene for an AI-accelerated world.

Where Helium shines

At DevUP, we built Helium for exactly this challenge: helping teams keep speed while staying secure and reliable.

Helium validates Azure environments continuously to confirm operational conformance and detect bad configurations, runtime issues, and drift before they become incidents.

That gives engineering teams confidence to move faster with AI, instead of slowing down after avoidable failures.

If you want, I can also share a short PPT version of this framework for leadership and architecture discussions.

Need some assistance?

We at DevUP can help you build this foundation and operationalize it in Azure.

Reach out here: